Observability in Microservices

Observability in microservices centers on making system behavior visible through data. It relies on metrics, traces, logs, and events to expose how services perform and interact. Teams use these signals to detect bottlenecks, failures, and capacity issues across service boundaries. With resilient architectures and clear ownership, dashboards evolve from passive displays into actionable insights. The sections below tie these signals to reliability outcomes and to production-ready patterns and practices.

What Is Observability in Microservices?

Observability in microservices is the ability to understand a system’s internal state from its external outputs. In practice it combines proactive monitoring, data-driven analysis, and resilient architecture to reveal performance, failures, and dependencies. Culture matters as much as tooling: transparency, disciplined experiments, and shared ownership shape outcomes. Budget also influences tooling choices, scalability planning, and continuous improvement initiatives, ideally without constraining team autonomy.

Collecting the Core Signals: Metrics, Traces, Logs, and Events

Collecting the core signals (metrics, traces, logs, and events) provides the observable foundation for microservice systems. This data feeds dashboards that support proactive scaling and guide capacity and reliability decisions as services evolve. A disciplined approach also supports schema evolution, so dashboards stay relevant as the emitted data changes. Robust telemetry, disciplined validation, and continuous refinement underpin resilient architectures.
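As a minimal sketch of how these signals can be tied together, the example below emits a JSON log line that carries a trace ID and increments a labeled request counter. It uses only the Python standard library; the names `request_counts`, `make_log_record`, and `handle_request` are hypothetical and stand in for whatever telemetry library a team actually uses.

```python
import json
import time
import uuid
from collections import Counter

# Hypothetical in-process metric store; a real service would export
# these counts to a metrics backend instead of keeping them in memory.
request_counts = Counter()

def new_trace_id() -> str:
    """Return a random 32-hex-character identifier for one request."""
    return uuid.uuid4().hex

def make_log_record(service: str, trace_id: str, message: str, **fields) -> str:
    """Build one JSON log line that carries the trace ID for correlation."""
    record = {
        "ts": time.time(),
        "service": service,
        "trace_id": trace_id,
        "message": message,
    }
    record.update(fields)
    return json.dumps(record, sort_keys=True)

def handle_request(service: str, route: str, status: int, trace_id: str) -> str:
    """Count the request (metric) and return its structured log line (log)."""
    request_counts[(service, route, status)] += 1
    return make_log_record(service, trace_id, "request handled",
                           route=route, status=status)

if __name__ == "__main__":
    tid = new_trace_id()
    print(handle_request("checkout", "/pay", 200, tid))
    print(dict(request_counts))
```

Because every log line and every downstream call can reuse the same `trace_id`, logs, metrics, and traces for one request can later be joined on that field.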

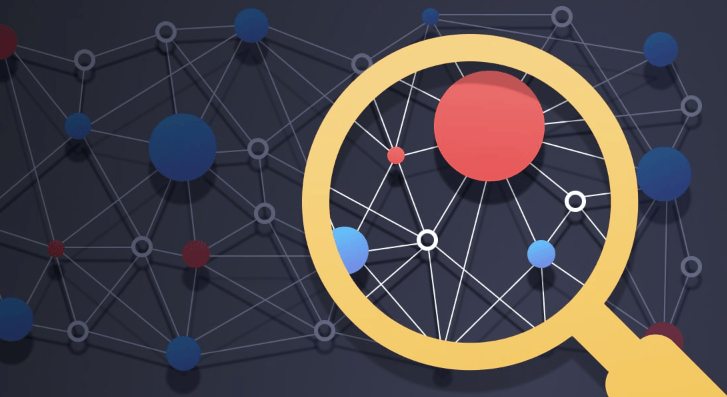

Troubleshooting Across Boundaries: Tracing Dependencies and Bottlenecks

How can teams illuminate cross-service performance when boundaries blur? The analysis relies on latency visualization, dependency maps, and bottleneck forecasting across service boundaries rather than within silos. A proactive, data-driven approach reveals service ownership gaps, exposes cross-service call patterns, and highlights resilience risks. Teams prioritize actionable dashboards, precise SLIs, and rapid fault isolation to reduce friction in complex architectures while preserving team autonomy.
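One way to picture dependency mapping and bottleneck detection is the sketch below, which derives service-to-service edges from span parent links and estimates where time is actually spent. The `Span` shape is a simplified assumption (real tracing systems carry more fields), and the self-time calculation assumes child spans run sequentially without overlap.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional

@dataclass
class Span:
    span_id: str
    parent_id: Optional[str]   # None for the root span of a trace
    service: str
    start_ms: float
    end_ms: float

def dependency_edges(spans):
    """Return {(caller, callee): call_count} for calls that cross services."""
    by_id = {s.span_id: s for s in spans}
    edges = defaultdict(int)
    for s in spans:
        parent = by_id.get(s.parent_id)
        if parent is not None and parent.service != s.service:
            edges[(parent.service, s.service)] += 1
    return dict(edges)

def self_time_by_service(spans):
    """Sum, per service, each span's duration minus its direct children's.

    Assumes children of a span do not overlap in time.
    """
    child_total = defaultdict(float)
    for s in spans:
        if s.parent_id is not None:
            child_total[s.parent_id] += s.end_ms - s.start_ms
    totals = defaultdict(float)
    for s in spans:
        totals[s.service] += (s.end_ms - s.start_ms) - child_total[s.span_id]
    return dict(totals)

# Illustrative trace: gateway -> orders -> (inventory, payments)
spans = [
    Span("a", None, "gateway", 0, 120),
    Span("b", "a", "orders", 10, 110),
    Span("c", "b", "inventory", 20, 40),
    Span("d", "b", "payments", 40, 100),
]
edges = dependency_edges(spans)
self_times = self_time_by_service(spans)
bottleneck = max(self_times, key=self_times.get)

if __name__ == "__main__":
    print(edges)
    print(self_times)
    print("largest self time:", bottleneck)
```

Ranking by self time rather than total duration avoids blaming an upstream service for latency that is really spent in its dependencies.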

Practical Patterns for Production Readiness

Production readiness emphasizes measurable SLIs, automated fault injection, and fast rollback capabilities.

Teams cultivate scaling resilience through capacity planning, load testing, and adaptive autoscaling.

An incident culture drives postmortems, data-driven improvements, and continuous instrumentation, building the trust teams need to evolve systems confidently.
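To make "measurable SLIs" concrete, the sketch below computes an availability SLI from event counts, compares it with an SLO target, and derives how much error budget remains. The function names and the rule of rolling back once the budget is overspent are illustrative assumptions, not a prescribed policy.

```python
def availability_sli(good_events: int, total_events: int) -> float:
    """Fraction of events that met the success criterion."""
    if good_events < 0 or total_events < 0 or good_events > total_events:
        raise ValueError("counts must satisfy 0 <= good_events <= total_events")
    if total_events == 0:
        return 1.0  # assumption: no traffic counts as meeting the objective
    return good_events / total_events

def error_budget_remaining(sli: float, slo_target: float) -> float:
    """Fraction of the error budget still unspent (negative when overspent)."""
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be strictly between 0 and 1")
    allowed_failure = 1.0 - slo_target   # e.g. 0.001 for a 99.9% SLO
    actual_failure = 1.0 - sli
    return 1.0 - actual_failure / allowed_failure

def should_roll_back(sli: float, slo_target: float) -> bool:
    """Hypothetical policy: roll back once the error budget is overspent."""
    return error_budget_remaining(sli, slo_target) < 0.0

if __name__ == "__main__":
    sli = availability_sli(good_events=99_950, total_events=100_000)
    print("SLI:", sli)
    print("budget remaining:", error_budget_remaining(sli, slo_target=0.999))
    print("roll back:", should_roll_back(sli, slo_target=0.999))
```

Expressing the rollback trigger in terms of remaining budget keeps the decision tied to the same SLI that dashboards and postmortems report.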

Frequently Asked Questions

How Do We Measure User-Perceived Latency Across Services?

User-perceived latency is measured with end-to-end tracing and user-centric SLIs, while correlating services along each request path attributes that latency to individual hops. Together these let teams quantify user-perceived latency across services accurately.
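As a rough illustration, the sketch below takes the spans of each trace as `(start_ms, end_ms)` pairs, computes the end-to-end duration per trace, and reports percentiles with the nearest-rank method. The trace data and the choice of nearest-rank are assumptions for the example; monitoring backends may interpolate percentiles differently.

```python
import math

def end_to_end_latency_ms(trace_spans):
    """Duration from the earliest span start to the latest span end."""
    start = min(s for s, _ in trace_spans)
    end = max(e for _, e in trace_spans)
    return end - start

def percentile(values, p):
    """Nearest-rank percentile: the smallest sample with at least
    p percent of all samples at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]

# Illustrative traces: each maps a trace ID to its spans' (start_ms, end_ms).
traces = {
    "t1": [(0, 80), (10, 60)],
    "t2": [(0, 200), (20, 190)],
    "t3": [(5, 125), (30, 100)],
    "t4": [(0, 95)],
    "t5": [(0, 400), (50, 390)],
}
latencies = [end_to_end_latency_ms(spans) for spans in traces.values()]

if __name__ == "__main__":
    print("p50:", percentile(latencies, 50))
    print("p95:", percentile(latencies, 95))
```

Reporting a high percentile alongside the median matters because a small share of slow requests can dominate what users actually notice.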

What Are Anti-Patterns That Damage Observability Quality?

Anti-patterns erode observability by masking issues: data gaps persist and correlations falter. Common examples are fragile instrumentation, inconsistent traces, and brittle dashboards. The remedy is redundancy, standardization, and resilient instrumentation that close gaps and reduce blind spots.

How Should Security and Privacy Be Integrated Into Observability Data?

Security and privacy should be integrated through privacy labeling, data minimization, and robust security controls, including encryption at rest and in transit. Proactive, data-driven governance keeps observability resilient while preserving trust.
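Data minimization can be sketched as a scrubbing step applied to each record before it leaves the service. In the example below, the key lists, the email pattern, and the `scrub` function are all hypothetical; a keyed hash is used for pseudonymization, which allows correlation across records but is not full anonymization.

```python
import hashlib
import hmac
import re

# Hypothetical policy: which keys to drop and which to pseudonymize.
DROP_KEYS = {"password", "authorization", "card_number"}
PSEUDONYMIZE_KEYS = {"user_id", "email"}
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

def pseudonymize(value: str, secret: bytes) -> str:
    """Replace a value with a truncated keyed hash so records stay joinable."""
    digest = hmac.new(secret, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]

def scrub(record: dict, secret: bytes) -> dict:
    """Return a copy of the record that is safer to ship to telemetry storage."""
    clean = {}
    for key, value in record.items():
        lowered = key.lower()
        if lowered in DROP_KEYS:
            continue                                  # never emit secrets
        if lowered in PSEUDONYMIZE_KEYS:
            clean[key] = pseudonymize(str(value), secret)
        elif isinstance(value, str):
            # Mask email addresses embedded in free-text fields.
            clean[key] = EMAIL_RE.sub("[redacted-email]", value)
        else:
            clean[key] = value
    return clean

if __name__ == "__main__":
    raw = {
        "user_id": "42",
        "password": "hunter2",
        "message": "receipt sent to bob@example.com",
        "status": 200,
    }
    print(scrub(raw, secret=b"rotate-me"))
```

Scrubbing at the source complements encryption at rest and in transit: data that is never emitted does not need to be protected downstream.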


When Should We Invest in Synthetic Monitoring Vs Real-User Monitoring?

Invest in synthetic monitoring when control, speed, and risk-free testing matter most. Real-user monitoring is the better fit when authenticity, actual user experience, and continuous feedback drive product optimization.
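For a sense of what a synthetic check involves, the sketch below runs a scripted action, times it, and reports pass or fail against a latency threshold. The `run_synthetic_check` helper and its result shape are hypothetical; the action is passed in as a callable so the example stays independent of any particular HTTP client or endpoint.

```python
import time
from typing import Callable

def run_synthetic_check(name: str,
                        action: Callable[[], bool],
                        max_latency_ms: float) -> dict:
    """Run one scripted check and report success, latency, and any error."""
    start = time.perf_counter()
    error = None
    try:
        succeeded = bool(action())
    except Exception as exc:          # a failing probe must not crash the runner
        succeeded = False
        error = repr(exc)
    latency_ms = (time.perf_counter() - start) * 1000.0
    return {
        "check": name,
        "ok": succeeded and latency_ms <= max_latency_ms,
        "latency_ms": latency_ms,
        "error": error,
    }

def fake_login_flow() -> bool:
    """Stand-in for a scripted user journey (assumption for this example)."""
    time.sleep(0.01)
    return True

if __name__ == "__main__":
    print(run_synthetic_check("login", fake_login_flow, max_latency_ms=500.0))
```

A check like this runs on a schedule under controlled conditions, which is what makes it useful before real traffic exists; it cannot show what actual users experience, which is the gap real-user monitoring fills.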

How to Evolve Observability Culture Across Teams and Orgs?

Observability culture evolves through shared metrics, dashboards, and incident playbooks that align teams, combined with clear data ownership. Teams break down silos while keeping autonomy and resilience within cross-functional governance.

Conclusion

Observability is an ongoing, data-driven discipline in pursuit of reliability. By collecting metrics, traces, logs, and events, teams uncover hidden bottlenecks and cross-boundary faults before they cause harm. Proactive practices improve performance and resilience, and dashboards show how dependable deployments are. With disciplined experimentation and cross-team collaboration, organizations turn chaos into clarity, avert outages, and accelerate recovery. Practical, persistent patterns support production readiness and durable decisions through a culture of continuous improvement.