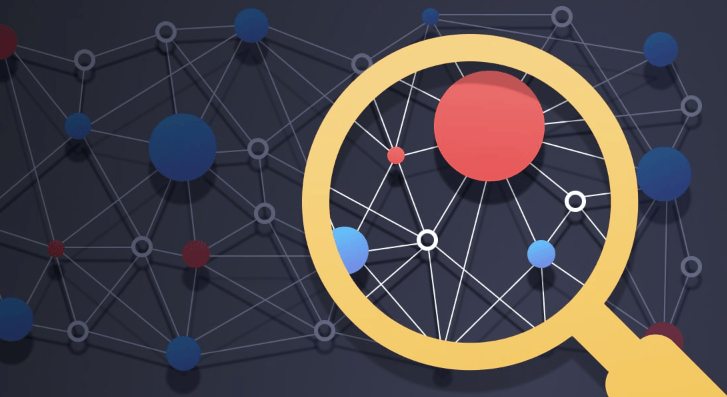

Object Detection Technology

Object detection identifies and localizes instances of predefined object classes in images or video, producing a bounding box and class label for each instance so that performance can be measured. Modern detectors build on end-to-end convolutional networks, region-based methods, and probabilistic reasoning, and they use feature pyramids and anchor mechanisms to handle scale variation. Real-world deployment means balancing speed against accuracy, addressing dataset bias and privacy, and evaluating against rigorous benchmarks. The technology has broad applications, but it calls for careful governance and ongoing evaluation.

What Is Object Detection and Why It Matters

Object detection is the task of identifying and localizing instances of predefined object classes within an image or video, typically by outputting bounding boxes and corresponding class labels.

This section treats object detection as a measurable capability within computer vision: given the same model and data, results should be repeatable.

The emphasis is on empirical evaluation, robustness, and well-defined performance metrics, which guide practical deployment and make detection systems scalable and verifiable.
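
Most detection metrics rest on a measure of how well a predicted box overlaps a ground-truth box. A widely used choice is intersection over union (IoU). The sketch below is a minimal illustration, assuming boxes are given as corner coordinates; the `Box` type and `iou` function are names chosen for this example, not part of any particular library.

```python
from typing import NamedTuple


class Box(NamedTuple):
    """Axis-aligned box in pixel coordinates (assumed corner format)."""
    x1: float
    y1: float
    x2: float
    y2: float


def area(box: Box) -> float:
    # Clamp at zero so degenerate boxes do not yield negative areas.
    return max(0.0, box.x2 - box.x1) * max(0.0, box.y2 - box.y1)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two boxes, in the range [0, 1]."""
    overlap = Box(max(a.x1, b.x1), max(a.y1, b.y1),
                  min(a.x2, b.x2), min(a.y2, b.y2))
    inter = area(overlap)
    union = area(a) + area(b) - inter
    return inter / union if union > 0 else 0.0


predicted = Box(0, 0, 2, 2)
ground_truth = Box(1, 1, 3, 3)
overlap_score = iou(predicted, ground_truth)  # 1 / 7, about 0.143
```

A detection is then usually counted as correct when its IoU with a ground-truth box of the same class exceeds a chosen threshold; the threshold itself is an evaluation setting, not something fixed by the method.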

Core Techniques Driving Modern Detectors

Modern detectors build on end-to-end convolutional architectures, region-based processing, and probabilistic reasoning to localize and classify objects accurately. Feature pyramids provide robustness to scale by making predictions at several resolutions, while anchor-based designs supply a structured set of candidate regions.
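
To make the anchor idea concrete, here is a minimal sketch of anchor generation over a small feature pyramid. The strides, base scales, aspect ratios, and the 64-pixel image size are illustrative assumptions, and `make_anchors` is a hypothetical helper rather than a function from any specific framework.

```python
from itertools import product


def make_anchors(feature_size, stride, scales, ratios):
    """Return (cx, cy, w, h) anchors for a square feature map.

    Each feature-map cell gets one anchor per (scale, ratio) pair,
    centred on the cell. `ratio` is width / height, and the anchor
    area stays at scale ** 2 for every ratio.
    """
    anchors = []
    for row, col in product(range(feature_size), repeat=2):
        cx = (col + 0.5) * stride
        cy = (row + 0.5) * stride
        for scale, ratio in product(scales, ratios):
            w = scale * ratio ** 0.5
            h = scale / ratio ** 0.5
            anchors.append((cx, cy, w, h))
    return anchors


# Assumed pyramid: coarser levels (larger stride) carry larger anchors.
image_size = 64
pyramid = {8: 32.0, 16: 64.0, 32: 128.0}  # stride -> base anchor scale
ratios = [0.5, 1.0, 2.0]

all_anchors = {
    stride: make_anchors(image_size // stride, stride, [scale], ratios)
    for stride, scale in pyramid.items()
}
```

With these assumed settings, the finest level produces 8 x 8 x 3 anchors and the coarsest 2 x 2 x 3, which is how a pyramid covers small and large objects with a similar number of anchor shapes per location.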

Empirical comparisons show a trade-off between speed and precision. Robust detectors fuse features across layers and apply regression refinements to box coordinates, which helps keep performance consistent across diverse datasets and challenging scenarios.
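
A regression refinement can be sketched as decoding predicted offsets relative to an anchor. The example below assumes the center-offset and log-size parametrization commonly used in region-based detectors; the source does not say which parametrization it has in mind, and `decode_box` is a hypothetical name.

```python
import math


def decode_box(anchor, deltas):
    """Apply regression deltas to an anchor.

    anchor: (cx, cy, w, h) in pixels.
    deltas: (dx, dy, dw, dh), where dx and dy shift the centre in units
            of the anchor size and dw and dh scale the size in log space.
    Returns the refined box as corners (x1, y1, x2, y2).
    """
    ax, ay, aw, ah = anchor
    dx, dy, dw, dh = deltas
    cx = ax + dx * aw
    cy = ay + dy * ah
    w = aw * math.exp(dw)
    h = ah * math.exp(dh)
    return (cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2)


anchor = (10.0, 10.0, 4.0, 4.0)
unchanged = decode_box(anchor, (0.0, 0.0, 0.0, 0.0))  # zero deltas keep the anchor
shifted = decode_box(anchor, (0.5, 0.0, 0.0, 0.0))    # centre moves right by half a width
```

Predicting offsets in units of the anchor size, rather than raw pixels, keeps the regression targets on a similar scale for small and large objects.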

Real-World Applications and Use Cases

Real-world deployment of object detection systems spans diverse domains, including autonomous navigation, surveillance, and industrial automation, where timely and reliable localization and classification of objects are essential for decision-making.

Empirical deployments show that performance varies with sensor quality, scene dynamics, and annotation accuracy, all of which inform scaling strategies.

Privacy requirements and the need to scale shape how systems are integrated, benchmarked, and brought into compliance. They also determine what must be measured and validated so that results transfer across heterogeneous environments.

Challenges, Ethics, and Privacy Considerations

Despite rapid advances in detection accuracy and speed, multiple challenges constrain practical deployment, including dataset biases, model robustness under diverse conditions, and the interpretability of decisions.

Privacy and bias mitigation are integral to responsible deployment. They involve data collection, consent, and oversight frameworks; methodological safeguards; and empirical evaluation of fairness, transparency, and accountability in real-world sensing systems.

Frequently Asked Questions

Can Object Detection Run in Real-Time on Mobile Devices?

Yes. Real-time mobile deployments rely on optimized models and on-device (edge) inference, trading off latency, accuracy, and energy use to stay responsive under constrained resources.
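
Whether a given model counts as real-time on a given device is best settled by measurement. The sketch below times repeated calls to a detector and reports median latency, an approximate 95th-percentile latency, and throughput. `fake_detector` is a placeholder standing in for a real model call, and the warm-up and run counts are arbitrary assumptions.

```python
import statistics
import time


def benchmark(detect, frame, warmup=3, runs=20):
    """Time `detect(frame)` and summarise per-call latency in milliseconds."""
    for _ in range(warmup):
        detect(frame)  # warm-up calls are not timed

    timings_ms = []
    for _ in range(runs):
        start = time.perf_counter()
        detect(frame)
        timings_ms.append((time.perf_counter() - start) * 1000.0)

    timings_ms.sort()
    median = statistics.median(timings_ms)
    # Nearest-rank approximation of the 95th percentile.
    p95 = timings_ms[min(len(timings_ms) - 1, int(0.95 * len(timings_ms)))]
    fps = 1000.0 / median if median > 0 else float("inf")
    return {"median_ms": median, "p95_ms": p95, "fps": fps}


def fake_detector(frame):
    # Placeholder workload; a real deployment would call the model here.
    return [sum(row) for row in frame]


frame = [[0] * 64 for _ in range(64)]
report = benchmark(fake_detector, frame)
```

Reporting a tail latency alongside the median matters on mobile hardware, where thermal throttling and background load can make occasional frames much slower than the typical one.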

How Do Detectors Handle Occluded Objects or Crowded Scenes?

Detectors use temporal cues and multi-view fusion to handle occlusion and stay stable in crowded scenes. They reduce ambiguity with priors, track partial evidence across frames, and re-identify objects when they reappear, while balancing precision, recall, and computational cost.
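
One simple form of temporal cue is matching boxes between consecutive frames by overlap, so that a track survives a frame in which its object is hidden. The sketch below uses greedy IoU matching as an illustration; it is one possible association scheme, not necessarily the one any particular detector uses, and `associate`, the track ids, and the `min_iou` threshold are assumptions.

```python
def iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def associate(tracks, detections, min_iou=0.3):
    """Greedily match existing tracks to new detections by IoU.

    tracks: dict of track id -> last known box.
    detections: list of boxes from the current frame.
    Returns (matches, lost_tracks, new_detections).
    """
    pairs = sorted(
        ((iou(box, det), tid, j)
         for tid, box in tracks.items()
         for j, det in enumerate(detections)),
        reverse=True,
    )
    matches, used = {}, set()
    for score, tid, j in pairs:
        if score < min_iou:
            break  # remaining pairs overlap even less
        if tid in matches or j in used:
            continue
        matches[tid] = j
        used.add(j)
    lost = [tid for tid in tracks if tid not in matches]
    new = [j for j in range(len(detections)) if j not in used]
    return matches, lost, new


tracks = {1: (0, 0, 10, 10), 2: (20, 20, 30, 30)}
detections = [(21, 21, 31, 31), (50, 50, 60, 60)]
matches, lost, new = associate(tracks, detections)
```

Here track 2 continues with the first detection, track 1 has no match (it may be occluded, so a tracker could keep it alive for a few frames), and the second detection starts a new track.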

What Evaluation Metrics Best Reflect Deployment Needs?

The best metrics reflect deployment constraints: they weigh precision and recall against latency. Model calibration helps make confidence estimates reliable, while practical metrics such as mAP, F1, and real-time throughput capture operational viability in constrained environments.
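
As a rough illustration of how F1 and the per-class average precision behind mAP are computed, the sketch below works from detections that have already been labeled as true or false positives (for example by an IoU threshold, which is an assumption here). It uses all-point interpolation of the precision-recall curve; benchmark suites differ in the exact interpolation, and the function names are hypothetical.

```python
def precision_recall_f1(tp, fp, fn):
    """Precision, recall, and F1 from true/false positive and miss counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


def average_precision(scored_hits, num_gt):
    """Area under the interpolated precision-recall curve for one class.

    scored_hits: list of (confidence, is_true_positive) per detection.
    num_gt: number of ground-truth objects of this class (must be > 0).
    """
    ranked = sorted(scored_hits, key=lambda item: item[0], reverse=True)
    tp = fp = 0
    points = []  # (recall, precision) after each detection
    for _, hit in ranked:
        if hit:
            tp += 1
        else:
            fp += 1
        points.append((tp / num_gt, tp / (tp + fp)))

    # Replace each precision with the best precision at equal or higher recall.
    envelope, best = [], 0.0
    for recall, precision in reversed(points):
        best = max(best, precision)
        envelope.append((recall, best))

    ap, prev_recall = 0.0, 0.0
    for recall, precision in reversed(envelope):
        ap += (recall - prev_recall) * precision
        prev_recall = recall
    return ap


hits = [(0.9, True), (0.8, False), (0.7, True)]
ap = average_precision(hits, num_gt=2)          # 5 / 6, about 0.833
p, r, f1 = precision_recall_f1(tp=2, fp=1, fn=0)  # 2/3, 1.0, 0.8
```

mAP is then the mean of these per-class values, sometimes further averaged over several IoU thresholds, so it summarizes ranking quality across all confidence levels, whereas F1 describes a single operating point.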

How to Choose Between One-Stage and Two-Stage Detectors?

One-stage detectors predict boxes and classes in a single pass, which typically makes them faster but more variable in accuracy. Two-stage detectors first propose candidate regions and then refine them, which typically improves accuracy at the cost of latency. The choice comes down to how much latency the application can trade for precision.

What Are Common Failure Modes in Diverse Environments?

Common failure modes include degraded accuracy under occlusion, challenging lighting, and scale variation. Crowded scenes make misses and false positives worse, so reliable performance calls for robust feature matching, temporal consistency, and context-aware fusion.

Conclusion

Object detection technology is often framed as a neutral tool, yet it shapes how systems perceive the world across domains by identifying and localizing objects with measurable accuracy. As performance improves, ethical safeguards and privacy protections must mature in tandem, ensuring robustness and fairness without overreach. Deployed with transparent benchmarks and governance, the technology can advance safety, efficiency, and insight while maintaining trust and accountability in real-world systems.